What Is Artificial Intelligence?

The formal definition from IBM’s research division frames it this way: AI is software that perceives its environment, processes that information, and takes actions to achieve a specific goal. What sets it apart from conventional software is that it learns. Classic software follows hard-coded rules: “if X happens, do Y.” AI systems learn patterns from data, which allows them to handle inputs the programmer never explicitly anticipated.

A practical way to think about it: when a spam filter correctly flags an email it has never seen before, that is AI at work. It learned what spam looks like from millions of examples, not from a list of forbidden words someone typed in manually.
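The contrast between a hand-typed rule and a filter that learns from examples can be sketched in a few lines of Python. This is a toy illustration under stated assumptions: the six training emails, the forbidden-word list, and all function names are hypothetical, and the learned filter is a simple naive Bayes classifier, not what any particular production spam filter uses.

```python
import math
from collections import Counter

# Classic software: a hand-written rule ("if X happens, do Y").
FORBIDDEN_WORDS = {"lottery", "winner"}  # hypothetical list typed in manually

def rule_based_is_spam(email: str) -> bool:
    return any(word in FORBIDDEN_WORDS for word in email.lower().split())

# Machine learning: estimate word statistics from labeled examples instead.
def train_naive_bayes(examples):
    """examples: list of (text, is_spam) pairs. Returns word counts, class counts, vocabulary."""
    word_counts = {True: Counter(), False: Counter()}
    class_counts = Counter()
    for text, is_spam in examples:
        word_counts[is_spam].update(text.lower().split())
        class_counts[is_spam] += 1
    vocab = set(word_counts[True]) | set(word_counts[False])
    return word_counts, class_counts, vocab

def learned_is_spam(email: str, model) -> bool:
    word_counts, class_counts, vocab = model
    total = sum(class_counts.values())
    scores = {}
    for label in (True, False):
        # Log prior plus log likelihood of each word, with add-one (Laplace) smoothing.
        score = math.log(class_counts[label] / total)
        denom = sum(word_counts[label].values()) + len(vocab)
        for word in email.lower().split():
            score += math.log((word_counts[label][word] + 1) / denom)
        scores[label] = score
    return scores[True] > scores[False]

training_data = [
    ("claim your free prize now", True),
    ("free cash prize waiting", True),
    ("urgent claim your reward", True),
    ("meeting moved to thursday", False),
    ("lunch with the team tomorrow", False),
    ("notes from the project meeting", False),
]
model = train_naive_bayes(training_data)

new_email = "claim your free reward"  # never seen before, contains no forbidden word
print(rule_based_is_spam(new_email))      # False: the rule misses it
print(learned_is_spam(new_email, model))  # True: learned word statistics flag it
```

The rule only knows the words someone typed in; the learned filter generalizes from which words tended to appear in spam, which is the distinction the paragraph above draws.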

The term “artificial intelligence” was coined by John McCarthy in the proposal for the 1956 Dartmouth Conference, where McCarthy, Marvin Minsky, and other founding researchers defined the field and set its ambition. The path from that summer workshop to today’s tools spans roughly 70 years of progress, setbacks, and breakthroughs.

A Brief History: How We Got Here

Understanding where AI came from helps explain why it works the way it does today.

1950

The question is asked. Alan Turing published “Computing Machinery and Intelligence,” asking “Can machines think?” and proposing the Turing Test – a behavioral benchmark for machine intelligence. The paper shaped the field’s core questions for decades.

1956

The field is born. The Dartmouth Conference formally established AI as an academic discipline. Early researchers believed human-level intelligence could be achieved within a generation.

1966

The first chatbot. MIT researcher Joseph Weizenbaum created ELIZA, a program that mimicked a therapist. It had no genuine understanding, but it demonstrated that people would engage emotionally with a text-based interface.

1974–1993

Two AI winters. Funding and interest collapsed twice as ambitious promises ran into the limits of available computing power and data. These winters pruned the field of its most speculative branches and forced researchers to focus on solvable problems.

2012

The deep learning breakthrough. A neural network called AlexNet won the ImageNet image recognition competition with a top-5 error rate of about 15%, more than 10 percentage points ahead of the runner-up, a margin that stunned the field. Deep learning quickly displaced older methods across nearly every AI application.

2017

The transformer. Researchers at Google published “Attention Is All You Need,” introducing the transformer architecture – the foundation for every major language model that followed, including GPT, Claude, and Gemini.

2022

AI becomes a household word. OpenAI released ChatGPT in November 2022, and it was estimated to have reached 100 million users within about two months, faster than any consumer app before it, including TikTok and Instagram. AI moved from a specialized research topic to a daily conversation.

2025–2026

The agentic shift. AI systems are no longer just answering questions. They are planning multi-step tasks, executing actions across software tools, and operating with growing autonomy. The current debate is not whether AI works but how much of your workflow to delegate to it.

How AI Actually Works

Machine Learning: Learning From Examples

Most modern AI is built on machine learning – a technique where a system learns patterns from data rather than following programmer-written rules.

The basic process, in its most common form (supervised learning):

- You collect a large dataset of labeled examples (photos labeled “cat” or “not cat,” emails labeled “spam” or “not spam,” etc.)

- You train a model on that data by showing it examples and gradually adjusting its internal parameters until it can predict the correct label

- You test it on new examples it has never seen
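The three steps above can be sketched with a perceptron, one of the simplest learning algorithms. This is a minimal illustration rather than how modern systems are trained: the two numeric features and the toy labeled points are hypothetical, chosen so that a straight line can separate the two classes.

```python
# Step 1: a small labeled dataset. Each example is ((feature1, feature2), label),
# with label 1 for "cat" and 0 for "not cat" (the features are hypothetical).
train_set = [
    ((2.0, 3.0), 1), ((3.0, 3.5), 1), ((2.5, 4.0), 1),
    ((-1.0, -2.0), 0), ((-2.0, -1.0), 0), ((-1.5, -2.5), 0),
]
test_set = [((3.0, 2.5), 1), ((-2.5, -1.5), 0)]  # examples never seen in training

def predict(weights, bias, features):
    # Weighted sum of the features plus a bias, thresholded at zero.
    total = sum(w * x for w, x in zip(weights, features)) + bias
    return 1 if total > 0 else 0

# Step 2: train by nudging the parameters whenever a prediction is wrong.
weights, bias, learning_rate = [0.0, 0.0], 0.0, 0.1
for epoch in range(20):
    for features, label in train_set:
        error = label - predict(weights, bias, features)  # -1, 0 or +1
        weights = [w + learning_rate * error * x for w, x in zip(weights, features)]
        bias += learning_rate * error

# Step 3: measure accuracy on the held-out test examples.
correct = sum(predict(weights, bias, f) == y for f, y in test_set)
print(f"test accuracy: {correct}/{len(test_set)}")
```

Keeping the test examples out of training is what makes step 3 meaningful: accuracy on data the model has already seen says little about how it handles new inputs.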

The model does not know why something is a cat. It has found statistical patterns in pixel arrangements that correlate with the label “cat.” That distinction matters – it explains many of AI’s quirks and failure modes.

Neural Networks: How the Learning Happens

Neural networks are the architecture that powers deep learning, the dominant form of machine learning today.

A neural network is composed of layers of simple mathematical functions called neurons. Each neuron takes a set of inputs, multiplies each by a learned weight (a number representing importance), adds the results together along with a learned offset called a bias, and passes the total through an activation function. Thousands or millions of these neurons are connected in layers.
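A single neuron, and a layer built from several of them, can be written directly from that description. This is a minimal sketch: the sigmoid is one common choice of activation function (others, such as ReLU, are widespread), the bias term follows the usual formulation, and the weights below are made-up numbers rather than trained values.

```python
import math

def sigmoid(z: float) -> float:
    # A common activation function: squashes any number into the range (0, 1).
    return 1.0 / (1.0 + math.exp(-z))

def neuron(inputs, weights, bias):
    # Multiply each input by its weight, add them up with the bias, then activate.
    z = sum(x * w for x, w in zip(inputs, weights)) + bias
    return sigmoid(z)

def layer(inputs, weight_rows, biases):
    # A layer is several neurons reading the same inputs, each with its own weights.
    return [neuron(inputs, w, b) for w, b in zip(weight_rows, biases)]

inputs = [0.5, -1.0, 2.0]
hidden = layer(inputs, weight_rows=[[0.2, -0.4, 0.1], [0.7, 0.3, -0.5]], biases=[0.0, 0.1])
output = neuron(hidden, weights=[1.5, -2.0], bias=0.2)  # a second layer with one neuron
print(hidden, output)
```

Stacking layers this way, with each layer's outputs feeding the next, is all "connected in layers" means; training is the separate problem of choosing the weights.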

The learning process works by comparing the network’s output to the correct answer, using an algorithm called backpropagation to calculate how much each weight contributed to the error, and then nudging every weight slightly in the direction that reduces that error (a procedure called gradient descent). Repeat this across millions of examples and you get a system that has internalized surprisingly sophisticated patterns.
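For a network with a single neuron, backpropagation reduces to one application of the chain rule, which makes the idea easy to see. The sketch below trains a sigmoid neuron to reproduce the logical OR function with plain gradient descent. It assumes a cross-entropy loss, for which the gradient with respect to the neuron's weighted sum simplifies to (prediction minus target); the learning rate and step count are arbitrary choices. Deeper networks repeat the same chain-rule step layer by layer, working backward from the output.

```python
import math

def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))

# Training examples for logical OR: inputs and the correct answer.
data = [([0.0, 0.0], 0.0), ([0.0, 1.0], 1.0), ([1.0, 0.0], 1.0), ([1.0, 1.0], 1.0)]

weights, bias, learning_rate = [0.0, 0.0], 0.0, 0.5

for _ in range(2000):
    grad_w, grad_b = [0.0, 0.0], 0.0
    for x, target in data:
        prediction = sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias)
        # With sigmoid + cross-entropy, d(loss)/d(weighted sum) = prediction - target.
        error = prediction - target
        # Chain rule: each weight's gradient is the error times the input it multiplied.
        grad_w = [g + error * xi for g, xi in zip(grad_w, x)]
        grad_b += error
    # Nudge every parameter slightly in the direction that reduces the average error.
    weights = [w - learning_rate * g / len(data) for w, g in zip(weights, grad_w)]
    bias -= learning_rate * grad_b / len(data)

for x, target in data:
    p = sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias)
    print(x, target, round(p, 3))
```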

To use an analogy from math educator 3Blue1Brown: imagine deciding whether to carry an umbrella. You mentally weigh factors (cloudiness, humidity, yesterday’s forecast), assigning more importance to some than others. That is the intuition behind a neuron. Neural networks run this process across millions of parameters simultaneously.

Large Language Models and Generative AI

Large language models (LLMs) take neural networks and scale them to an extreme degree. GPT-3, released by OpenAI in 2020, had 175 billion parameters – the equivalent of 175 billion adjustable knobs the network learned to tune. Current frontier models are estimated to be significantly larger.

LLMs are trained on enormous text datasets to predict the next word in a sequence (more precisely, the next token, which can be a whole word or a fragment of one). That sounds simple, but at sufficient scale it produces a system that can write coherent essays, explain technical concepts, translate languages, summarize documents, and generate code.
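The training objective itself, predicting the next word from what came before, can be illustrated with a bigram model that simply counts which word follows which in a tiny corpus. This is a deliberately crude stand-in with a made-up corpus: real LLMs operate on tokens rather than whole words, condition on thousands of preceding tokens rather than one, and learn the prediction with a neural network instead of a lookup table.

```python
from collections import Counter, defaultdict

corpus = (
    "the cat sat on the mat . the cat chased the mouse . "
    "the dog sat on the rug ."
).split()

# Count how often each word follows each preceding word.
next_word_counts = defaultdict(Counter)
for previous, current in zip(corpus, corpus[1:]):
    next_word_counts[previous][current] += 1

def predict_next(word: str) -> str:
    # Return the most frequent continuation seen in the corpus
    # (ties go to the continuation encountered first).
    return next_word_counts[word].most_common(1)[0][0]

def generate(start: str, length: int) -> str:
    # Repeatedly append the predicted next word (greedy decoding).
    words = [start]
    for _ in range(length):
        words.append(predict_next(words[-1]))
    return " ".join(words)

print(predict_next("sat"))  # on
print(generate("the", 4))   # the cat sat on the
```

Even this toy produces locally fluent text with no notion of whether it is true, which foreshadows the hallucination problem discussed later.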

Generative AI is the broader category: any AI that generates new content (text, images, audio, or video) rather than just classifying existing content. LLMs are generative AI for text. Diffusion models (the technology behind image generators like DALL-E 2 and 3, Stable Diffusion, and Midjourney) are generative AI for images.

Types of AI: What Exists and What Does Not

One of the most important distinctions in AI is between capability levels. Researchers typically describe three:

Narrow AI (Weak AI)

Designed to excel at one specific task or a tightly related cluster of tasks. Every AI system deployed commercially today is narrow AI. A chess engine is extraordinary at chess and useless at everything else. A voice assistant recognizes speech commands but cannot reason about genuinely novel situations. Narrow AI can appear impressively broad – a chatbot can discuss philosophy, write a poem, and debug code – but it is doing pattern matching, not applying flexible human judgment.

Artificial General Intelligence (AGI)

The research goal of building a system that matches human cognitive flexibility: able to learn a new skill without forgetting old ones, transfer knowledge across domains, and reason about genuinely novel situations. Despite widespread media coverage suggesting AGI is imminent, there is no scientific consensus on a timeline, and no current system meets the definition.

Artificial Superintelligence (ASI)

A system that would surpass human intelligence across all cognitive domains. This remains entirely theoretical and is not a near-term engineering problem – it is a philosophical and safety discussion about a future that may or may not arrive.

The Main AI Disciplines You Will Encounter

The AI field contains several overlapping subdisciplines. Here are the ones that appear most often outside of academic contexts:

| Discipline | What It Does | Examples |
|---|---|---|
| Machine Learning | Systems that learn patterns from data. Almost all modern AI uses ML. | Recommendation engines, fraud detection |
| Deep Learning | ML built on deep neural networks with many layers. | Image recognition, speech recognition, language models |
| Natural Language Processing | AI that understands and generates human language. | Chatbots, translation tools, summarizers |
| Computer Vision | AI that interprets images and video. | Medical imaging, autonomous vehicles, quality control |
| Reinforcement Learning | An agent learns by receiving rewards or penalties for actions. | AlphaGo, AlphaZero, robotics control |
| Generative AI | AI that produces new content rather than classifying existing content. | ChatGPT, DALL-E, Sora, Midjourney |

AI Chatbots: The Interface Most People Use

For most people, the first direct experience with AI is through a chatbot: a text or voice interface powered by a large language model. The leading options as of early 2026 are OpenAI’s ChatGPT, Anthropic’s Claude, and Google’s Gemini.

These tools are not interchangeable – each has genuine strengths and consistent weaknesses. For a direct comparison, see our guide: Claude AI vs ChatGPT: Which Should You Use?

Where AI Is Used Right Now

Healthcare

Hospitals and health systems have been among the earliest enterprise adopters. According to a 2025 report from Unified AI Hub, approximately 80% of hospitals have incorporated AI, with the US AI healthcare market projected to grow from $7.72 billion in 2024 to nearly $100 billion by 2033.
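That projection implies a steep compound annual growth rate (CAGR). As a rough worked check, treating “nearly $100 billion” as $100 billion and 2024 to 2033 as nine years of growth:

```latex
\text{CAGR} = \left(\frac{100}{7.72}\right)^{1/9} - 1 \approx 12.95^{1/9} - 1 \approx 1.33 - 1 = 0.33
```

In other words, the forecast assumes the market grows by roughly a third every year for nine consecutive years.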

The FDA had cleared approximately 950 medical devices using AI or machine learning as of August 2024. The most deployed applications are in radiology (detecting anomalies in X-rays and CT scans) and clinical documentation – tools like Microsoft’s Dragon Copilot that transcribe consultations and generate structured notes, reportedly saving up to 40% of time on patient record reviews.

Finance

Banks invested approximately $21 billion in AI in 2023. Common applications include fraud detection in real-time transaction monitoring, automated loan underwriting (reducing processing time from days to minutes), and market analysis tools that surface patterns across large datasets.

Education

AI tutoring tools, automated grading systems, and adaptive learning platforms are entering classrooms at an accelerating pace. Global spending on AI in education is forecast to surpass $32 billion by 2030.

Software Development

GitHub Copilot, which draws context from a developer’s codebase and suggests completions, has been adopted widely in professional engineering teams. The tool supports code generation, documentation, refactoring, and test writing. It offers a free tier with paid plans for individuals and teams.

Everyday Consumer Tools

- Search engines (ranking, query understanding, featured snippets)

- Recommendation systems (YouTube, Netflix, Spotify)

- Voice assistants (Siri, Alexa, Google Assistant)

- Email spam and phishing filters

- Navigation apps (real-time traffic prediction)

- Autocomplete in messaging and email

The Business Reality: Where AI Investment Stands

The most telling constraint is organizational, not technical. According to McKinsey survey data from 2025, only 64% of organizations are actively mitigating AI inaccuracy risks, 63% are addressing regulatory compliance, and 60% are working on cybersecurity concerns, even though most leaders acknowledge that those risks exist. The deployment gap between what AI can do and what organizations have safely integrated is the defining challenge of 2025–2026.

What AI Cannot Do: Real Limitations

Understanding AI’s limits is as important as understanding its capabilities.

Hallucination

LLMs generate plausible-sounding text by predicting what words should come next; they do not retrieve facts from a verified database. When the training data does not contain a reliable answer, the model may generate a confident but incorrect one. This is a structural property of the technology, not a bug that will simply be patched away, though systems that ground their answers in retrieved documents (retrieval-augmented generation) can reduce how often it happens.
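The retrieval idea mentioned above can be sketched in a few lines: look up supporting text first, and decline to answer when nothing relevant is found instead of generating a fluent guess. Everything here is a hypothetical toy. The document store, the word-overlap scoring, and the `min_overlap` threshold are assumptions for illustration; production retrieval-augmented systems typically use vector embeddings and pass the retrieved passages to the language model rather than returning them directly.

```python
import re

# A hypothetical verified knowledge base.
DOCUMENTS = [
    "The transformer architecture was introduced in 2017 in the paper Attention Is All You Need.",
    "AlexNet won the ImageNet image recognition competition in 2012.",
    "ELIZA was created by Joseph Weizenbaum at MIT in 1966.",
]

STOPWORDS = {"the", "a", "an", "in", "of", "was", "is", "by", "at", "who", "what", "when"}

def words(text: str) -> set:
    # Lowercase word tokens, ignoring very common words.
    return {w for w in re.findall(r"[a-z0-9]+", text.lower()) if w not in STOPWORDS}

def retrieve(question: str, min_overlap: int = 2):
    # Return the document sharing the most words with the question, or None if too few.
    q = words(question)
    best = max(DOCUMENTS, key=lambda doc: len(q & words(doc)))
    return best if len(q & words(best)) >= min_overlap else None

def grounded_answer(question: str) -> str:
    source = retrieve(question)
    if source is None:
        return "I don't know: no supporting document found."
    return f"According to the knowledge base: {source}"

print(grounded_answer("Who created ELIZA at MIT?"))
print(grounded_answer("What is the boiling point of mercury?"))
```

The design choice worth noticing is the explicit abstain path: a pure next-word predictor has no equivalent, which is why it fills gaps with plausible text.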

Bias from training data

AI systems learn from human-generated data, which carries human biases. A hiring algorithm trained on historical decisions may replicate those decisions’ biases. The 2025 AI Index Report from Stanford’s HAI documented ongoing research into implicit bias in models, including racial classification issues in multimodal systems.
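How a model inherits bias from historical decisions can be shown with almost no machinery. The sketch below is hypothetical throughout: the records, the group labels “A” and “B”, and the frequency-based “model” are invented for illustration. Because the model only learns to reproduce past hiring rates, equally qualified candidates from the two groups receive very different scores.

```python
from collections import defaultdict

# Hypothetical historical decisions: (group, qualified, hired).
# Qualified candidates from group B were hired less often in the past.
history = [
    ("A", True, True), ("A", True, True), ("A", True, True), ("A", True, False),
    ("B", True, True), ("B", True, False), ("B", True, False), ("B", True, False),
    ("A", False, False), ("B", False, False),
]

def train(records):
    # "Learn" the historical hire rate for each (group, qualified) combination.
    hires, totals = defaultdict(int), defaultdict(int)
    for group, qualified, hired in records:
        totals[(group, qualified)] += 1
        hires[(group, qualified)] += hired  # True counts as 1, False as 0
    return {key: hires[key] / totals[key] for key in totals}

model = train(history)

# Two equally qualified new applicants get very different scores.
print(model[("A", True)])  # 0.75
print(model[("B", True)])  # 0.25
```

Nothing in the code is malicious; the skew comes entirely from the training data, which is why auditing that data matters as much as auditing the algorithm.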

No genuine understanding

AI systems recognize patterns; they do not reason about the world the way humans do. An LLM can generate a correct proof of a mathematical theorem and also generate a plausible-looking incorrect one – it has no internal sense of which is which without external verification mechanisms.

Privacy exposure

Training data and inference logs can contain personal information. Membership inference attacks – techniques that probe whether a specific data record was in a model’s training set – are an active area of security research.

AI incidents are rising

The AI Incident Database recorded 233 reported incidents in 2024, a 56.4% increase over 2023. This is not a reason to avoid AI tools, but it is a reason to maintain human oversight in high-stakes decisions.

AI Ethics and Governance: Why It Matters

The rapid deployment of AI has outpaced the development of frameworks for its safe use. Several areas of ongoing debate:

Transparency and accountability: Who is responsible when an AI system makes a harmful decision? Many deployed models are black boxes: their internal reasoning is not interpretable even by their creators.

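Regulation: The EU AI Act, which entered into force in 2024 and is being phased in over several years, is the most comprehensive regulatory framework so far. It sorts AI applications into risk tiers (unacceptable, high, limited, and minimal risk), banning unacceptable-risk uses outright and imposing progressively lighter obligations at each lower tier. Other jurisdictions are developing their own frameworks.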

Labor and displacement: AI automation is already changing workforce requirements in writing, coding, customer service, and data analysis. The economic effects are real, though the direction – net job creation or net displacement – is genuinely contested among economists.

Concentration of capability: The largest AI models require computational resources that only a handful of organizations can afford. This concentration has implications for who controls the technology and on what terms others can access it.

What Comes Next: The Trajectory in 2026

Several directions are shaping the near-term development of AI:

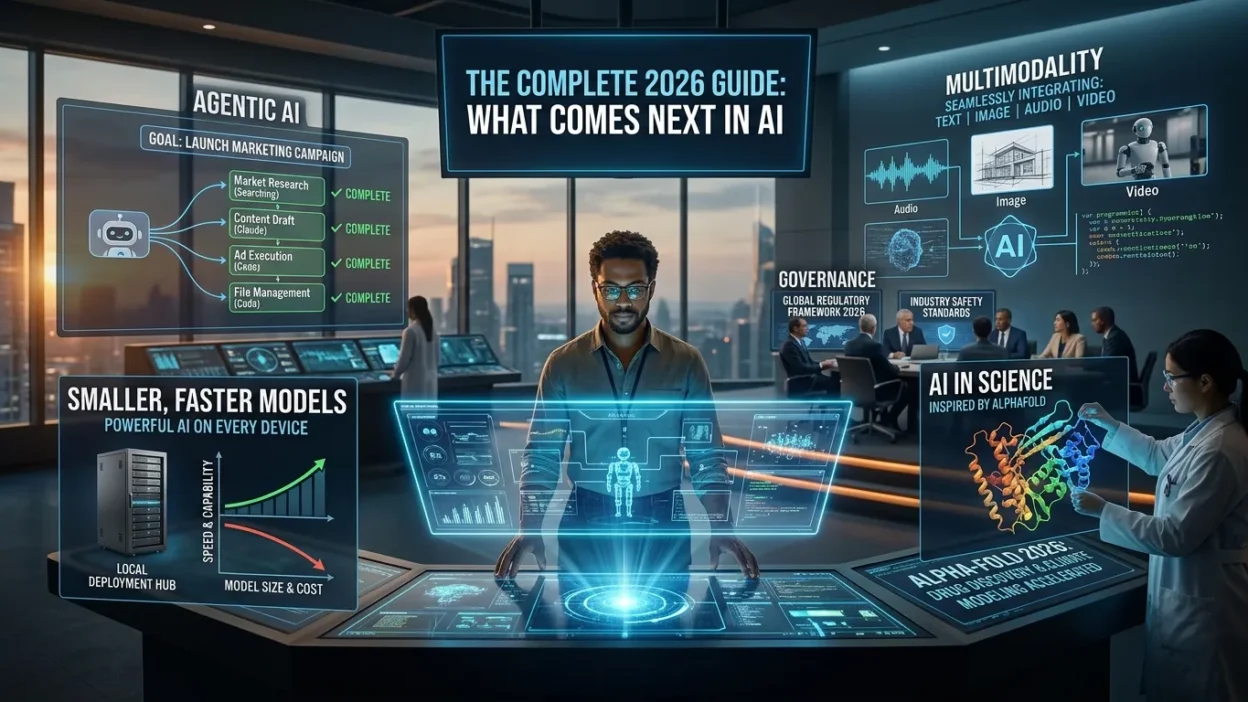

Agentic AI. Rather than responding to single prompts, AI systems are increasingly designed to break a goal into steps, use tools (web search, code execution, file management), and complete multi-step tasks with minimal human direction. This represents a qualitative shift in what AI is being asked to do.
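The plan-then-act loop behind agentic systems can be sketched as follows. This is a hypothetical skeleton, not any vendor's API: the two tools are stubs, and the hard-coded `plan_steps` function stands in for the language model that would normally decide which tool to call next and with what arguments.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical tools the agent is allowed to use (stubs for real search, code execution, etc.).
def search_web(query: str) -> str:
    return f"[stub search results for '{query}']"

def run_calculation(expression: str) -> str:
    # Deliberately restricted: only supports "a+b" with integers, to avoid eval().
    left, right = expression.split("+")
    return str(int(left) + int(right))

TOOLS: Dict[str, Callable[[str], str]] = {
    "search_web": search_web,
    "run_calculation": run_calculation,
}

def plan_steps(goal: str) -> List[Tuple[str, str]]:
    # Stand-in for an LLM planner: break the goal into (tool name, argument) steps.
    return [("search_web", goal), ("run_calculation", "2+3")]

def run_agent(goal: str, max_steps: int = 5) -> List[str]:
    log = []
    for tool_name, argument in plan_steps(goal)[:max_steps]:
        if tool_name not in TOOLS:  # refuse tools the agent was not given
            log.append(f"skipped unknown tool {tool_name}")
            continue
        result = TOOLS[tool_name](argument)
        log.append(f"{tool_name}({argument!r}) -> {result}")
    return log

for entry in run_agent("summarize this week's AI news"):
    print(entry)
```

A real agent would usually loop, feeding each tool result back to the model so it can revise the plan; this sketch plans once for simplicity. The explicit tool registry and step limit reflect the kind of boundaries that matter once systems act with less human direction.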

Multimodality. Frontier models are no longer text-only. GPT-4o, Claude, and Gemini can all accept images alongside text, and depending on the model they can also take in audio or video, or generate images and speech. This expands the range of tasks AI can assist with and blurs the boundary between narrow AI categories.

Smaller, faster models. The field is not only pursuing larger models. Significant research effort is going into making capable models that run on consumer hardware or at lower cost – crucial for broad deployment.

AI in science. Google DeepMind’s AlphaFold has already had measurable impact on protein structure research. AI tools are being applied in drug discovery, materials science, and climate modeling, with the potential to accelerate research that would take human scientists much longer.

Governance. Regulatory frameworks, industry safety standards, and organizational AI policies are developing in parallel with the technology. How well governance keeps pace with capability will be a significant story of the next several years.

Frequently Asked Questions

What is artificial intelligence in simple terms?

Artificial intelligence is software that can learn from data and make decisions or produce outputs – like text, images, or predictions – without being given explicit rules for every situation. The computer figures out patterns from examples rather than following a fixed script.

What is the difference between AI and machine learning?

Machine learning is a subset of AI. AI is the broad goal of making computers behave intelligently. Machine learning is one method for achieving that goal: letting a system learn patterns from data rather than programming every rule by hand. Not all AI uses machine learning, but most modern AI does.

What is an AI chatbot?

An AI chatbot is a program that can hold a text or voice conversation with a human. Modern AI chatbots like ChatGPT, Claude, and Gemini use large language models – neural networks trained on vast amounts of text – to generate contextually relevant replies, answer questions, and complete tasks.

What is generative AI?

Generative AI is a type of artificial intelligence that produces new content – text, images, audio, video, or code – rather than simply classifying or analyzing existing data. It learns statistical patterns from large datasets and uses them to generate original outputs.

Does true artificial general intelligence exist yet?

No. Every AI system deployed today is narrow AI: highly capable within a specific domain but unable to transfer that capability freely to unrelated tasks. Artificial general intelligence – a system that matches human cognitive flexibility – remains a research goal without a clear timeline.

What are the biggest risks of AI?

The most documented risks include hallucination (generating plausible but false information), bias inherited from training data, privacy exposure, and the potential for misuse in disinformation or cyberattacks. The AI Incident Database recorded 233 reported AI incidents in 2024, up 56% from the previous year.

How is AI used in everyday life?

AI is embedded in tools most people use daily: search engine ranking, spam filters, voice assistants, navigation apps, streaming recommendations, autocomplete in email and messaging, and fraud detection on payment cards. Most people interact with AI dozens of times a day without consciously recognizing it.

What is the best AI chatbot to use?

The answer depends on your use case. ChatGPT is strong for business analysis and breadth. Claude is consistently rated highly for writing quality and instruction-following. Gemini is practical if you are already embedded in Google’s ecosystem. All three have free tiers.